前言

前面几篇文章介绍了k8s的部署、对外服务、集群网络、微服务支持,在生产环境中使用,离不开运行状态监控,本篇开始部署使用prometheus,被各大公司广泛使用的容器监控工具。

工作方式

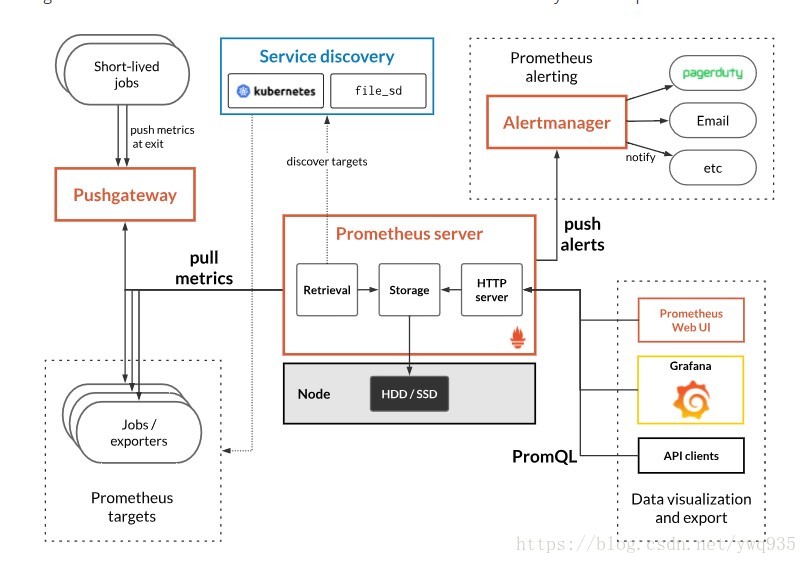

Prometheus工作示意图:

在k8s中,关于集群的资源有metrics度量值的概念,有各种不同的exporter可以通过api接口对外提供各种度量值的及时数据,prometheus在与k8s融合工作的过程,就是通过与这些提供metric值得exporter进行交互,获取数据,整合数据,展示数据,触发告警的过程。 一、获取metrics: 1.对短暂生命周期的任务,采取拉的形式获取metrics (不常见) 2.对于exporter提供的metrics,采取拉的方式获取metrics(通常方式),对接的exporter常见的有:kube-apiserver 、cadvisor、node-exporter,也可根据应用类型部署相应的exporter,获取该应用的状态信息,目前支持的应用有:nginx/haproxy/mysql/redis/memcache等。

二、数据汇总及按需获取: 可以按照官方定义的expr表达式格式,以及PromQL语法对相应的指标进程过滤,数据展示及图形展示。不过自带的webui较为简陋,但prometheus同时提供获取数据的api,grafana可通过api获取prometheus数据源,来绘制更精细的图形效果用以展示。

expr书写格式及语法参考官方文档: https://prometheus.io/docs/prometheus/latest/querying/basics/

三、告警推送 prometheus支持多种告警媒介,对满足条件的告警自动触发告警,并可对告警的发送规则进行定制,例如重复间隔、路由等,可以实现非常灵活的告警触发。

部署

1.配置configmap,在部署前将Prometheus主程序配置文件准备好,以configmap的形式挂载进deployment中。 prometheus-configmap.yaml:

apiVersion: v1

kind: ConfigMap

metadata:

name: prometheus-config

namespace: kube-system

data:

prometheus.yml: |

global:

scrape_interval: 15s

evaluation_interval: 15s

rule_files:

- /etc/prometheus/rules.yml

alerting:

alertmanagers:

- static_configs:

- targets: ["alertmanager:9093"]

scrape_configs:

- job_name: 'kubernetes-apiservers'

kubernetes_sd_configs:

- role: endpoints

scheme: https

tls_config:

ca_file: /var/run/secrets/kubernetes.io/serviceaccount/ca.crt

bearer_token_file: /var/run/secrets/kubernetes.io/serviceaccount/token

relabel_configs:

- source_labels: [__meta_kubernetes_namespace, __meta_kubernetes_service_name, __meta_kubernetes_endpoint_port_name]

action: keep

regex: default;kubernetes;https

- job_name: 'kubernetes-nodes'

kubernetes_sd_configs:

- role: node

scheme: https

tls_config:

ca_file: /var/run/secrets/kubernetes.io/serviceaccount/ca.crt

bearer_token_file: /var/run/secrets/kubernetes.io/serviceaccount/token

relabel_configs:

- action: labelmap

regex: __meta_kubernetes_node_label_(.+)

- target_label: __address__

replacement: kubernetes.default.svc:443

- source_labels: [__meta_kubernetes_node_name]

regex: (.+)

target_label: __metrics_path__

replacement: /api/v1/nodes/${1}/proxy/metrics

- job_name: 'kubernetes-cadvisor'

kubernetes_sd_configs:

- role: node

scheme: https

tls_config:

ca_file: /var/run/secrets/kubernetes.io/serviceaccount/ca.crt

bearer_token_file: /var/run/secrets/kubernetes.io/serviceaccount/token

relabel_configs:

- action: labelmap

regex: __meta_kubernetes_node_label_(.+)

- target_label: __address__

replacement: kubernetes.default.svc:443

- source_labels: [__meta_kubernetes_node_name]

regex: (.+)

target_label: __metrics_path__

replacement: /api/v1/nodes/${1}/proxy/metrics/cadvisor

- job_name: 'kubernetes-service-endpoints'

kubernetes_sd_configs:

- role: endpoints

relabel_configs:

- source_labels: [__meta_kubernetes_service_annotation_prometheus_io_scrape]

action: keep

regex: true

- source_labels: [__meta_kubernetes_service_annotation_prometheus_io_scheme]

action: replace

target_label: __scheme__

regex: (https?)

- source_labels: [__meta_kubernetes_service_annotation_prometheus_io_path]

action: replace

target_label: __metrics_path__

regex: (.+)

- source_labels: [__address__, __meta_kubernetes_service_annotation_prometheus_io_port]

action: replace

target_label: __address__

regex: ([^:]+)(?::\d+)?;(\d+)

replacement: $1:$2

- action: labelmap

regex: __meta_kubernetes_service_label_(.+)

- source_labels: [__meta_kubernetes_namespace]

action: replace

target_label: kubernetes_namespace

- source_labels: [__meta_kubernetes_service_name]

action: replace

target_label: kubernetes_name

- job_name: 'kubernetes-services'

kubernetes_sd_configs:

- role: service

metrics_path: /probe

params:

module: [http_2xx]

relabel_configs:

- source_labels: [__meta_kubernetes_service_annotation_prometheus_io_probe]

action: keep

regex: true

- source_labels: [__address__]

target_label: __param_target

- target_label: __address__

replacement: blackbox-exporter.example.com:9115

- source_labels: [__param_target]

target_label: instance

- action: labelmap

regex: __meta_kubernetes_service_label_(.+)

- source_labels: [__meta_kubernetes_namespace]

target_label: kubernetes_namespace

- source_labels: [__meta_kubernetes_service_name]

target_label: kubernetes_name

- job_name: 'kubernetes-ingresses'

kubernetes_sd_configs:

- role: ingress

relabel_configs:

- source_labels: [__meta_kubernetes_ingress_annotation_prometheus_io_probe]

action: keep

regex: true

regex: (.+)

- source_labels: [__address__, __meta_kubernetes_pod_annotation_prometheus_io_port]

action: replace

regex: ([^:]+)(?::\d+)?;(\d+)

replacement: $1:$2

target_label: __address__

- action: labelmap

regex: __meta_kubernetes_pod_label_(.+)

- source_labels: [__meta_kubernetes_namespace]

action: replace

target_label: kubernetes_namespace

- source_labels: [__meta_kubernetes_pod_name]

action: replace

target_label: kubernetes_pod_name

- job_name: 'kubernetes-node-exporter'

scheme: http

tls_config:

ca_file: /var/run/secrets/kubernetes.io/serviceaccount/ca.crt

bearer_token_file: /var/run/secrets/kubernetes.io/serviceaccount/token

kubernetes_sd_configs:

- role: node

relabel_configs:

- action: labelmap

regex: __meta_kubernetes_node_label_(.+)

- source_labels: [__meta_kubernetes_role]

action: replace

target_label: kubernetes_role

- source_labels: [__address__]

regex: '(.*):10250'

replacement: '${1}:31672'

target_label: __address__

2.部署prometheus工作主程序,注意挂载上面的configmap: prometheus.deploy.yml:

apiVersion: apps/v1beta2

kind: Deployment

metadata:

labels:

name: prometheus-deployment

name: prometheus

namespace: kube-system

spec:

replicas: 1

selector:

matchLabels:

app: prometheus

template:

metadata:

labels:

app: prometheus

spec:

containers:

- image: prom/prometheus:v2.0.0

name: prometheus

command:

- "/bin/prometheus"

args:

- "--config.file=/etc/prometheus/prometheus.yml"

- "--storage.tsdb.path=/prometheus"

- "--storage.tsdb.retention=24h"

ports:

- containerPort: 9090

protocol: TCP

volumeMounts:

- mountPath: "/prometheus"

name: data

- mountPath: "/etc/prometheus"

name: config-volume

resources:

requests:

cpu: 100m

memory: 100Mi

limits:

cpu: 500m

memory: 2500Mi

serviceAccountName: prometheus

volumes:

- name: data

emptyDir: {}

- name: config-volume

configMap:

name: prometheus-config

3.部署svc、ingress、rbac授权。 注意:在本地是使用traefik做对外服务代理的,因此修改了默认的NodePort的svc.type为ClusterIP的方式,添加ingress后,可以以域名方式直接访问。若不做代理,可以无需部署ingress,svc.type使用默认的NodePort。 prometheus.svc.yaml:

kind: Service

apiVersion: v1

metadata:

labels:

app: prometheus

name: prometheus

namespace: kube-system

spec:

type: ClusterIP

ports:

- port: 80

protocol: TCP

targetPort: 9090

selector:

app: prometheus

prometheus.ing.yaml:

apiVersion: extensions/v1beta1

kind: Ingress

metadata:

name: prometheus

namespace: kube-system

selfLink: /apis/extensions/v1beta1/namespaces/default/ingresses/prometheus

spec:

rules:

- host: prometheusv19.abc.com

http:

paths:

- backend:

serviceName: prometheus

servicePort: 80

path: /

rbac-setup.yaml:

kind: ClusterRole

metadata:

name: prometheus

rules:

- apiGroups: [""]

resources:

- nodes

- nodes/proxy

- services

- endpoints

- pods

verbs: ["get", "list", "watch"]

- apiGroups:

- extensions

resources:

- ingresses

verbs: ["get", "list", "watch"]

- nonResourceURLs: ["/metrics"]

verbs: ["get"]

---

apiVersion: v1

kind: ServiceAccount

metadata:

name: prometheus

namespace: kube-system

---

apiVersion: rbac.authorization.k8s.io/v1

kind: ClusterRoleBinding

metadata:

name: prometheus

roleRef:

apiGroup: rbac.authorization.k8s.io

kind: ClusterRole

name: prometheus

subjects:

- kind: ServiceAccount

name: prometheus

namespace: kube-system

依次部署上方几个yaml文件,待初始化完成后,配置好dns记录,即可打开浏览器访问:

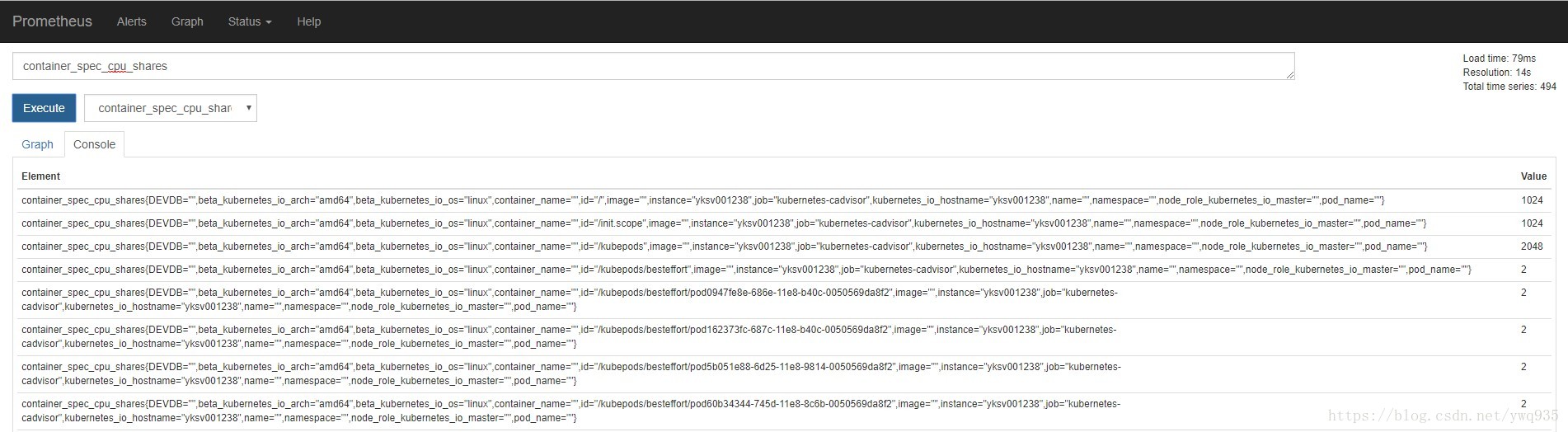

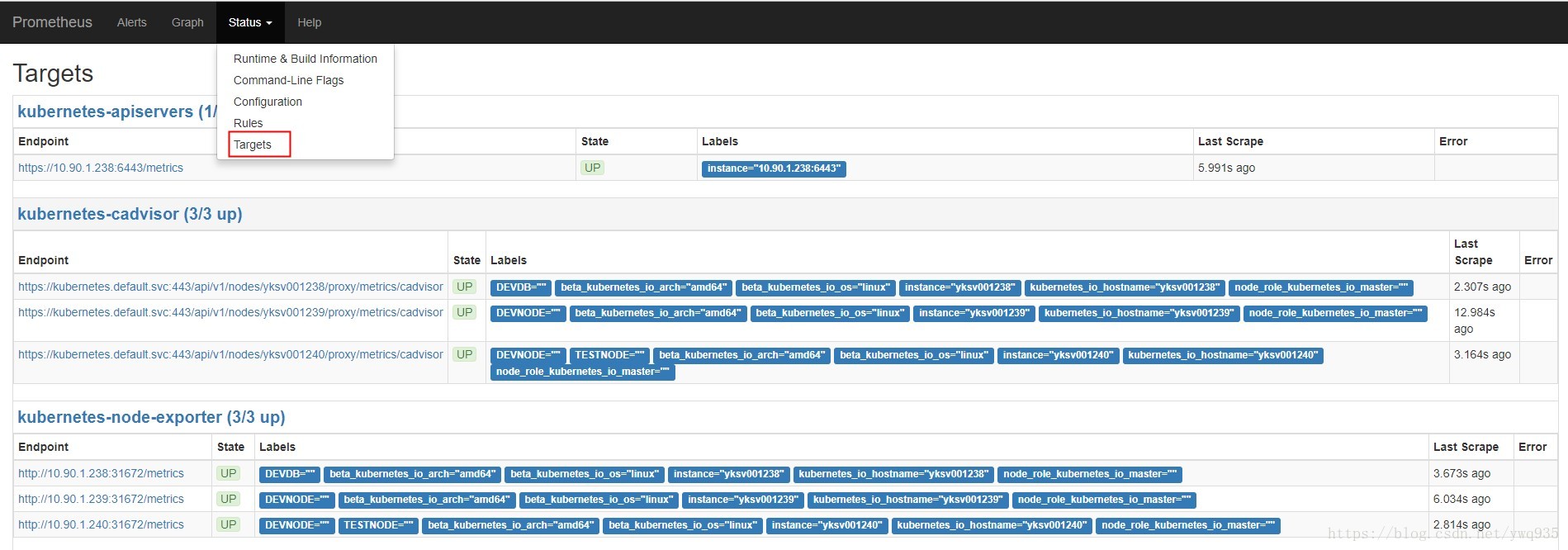

随便选取一个metric,点击execute,查看是否能正常获取结果输出。点击status---target,可以看到metrics的数据来源,即各exporter,点击相应exporter上的链接可查看这个exporter提供的metrics明细。

随便选取一个metric,点击execute,查看是否能正常获取结果输出。点击status---target,可以看到metrics的数据来源,即各exporter,点击相应exporter上的链接可查看这个exporter提供的metrics明细。

为了更好的展示图形效果,需要部署grafana,因此前已经部署有grafana,这里不再部署,贴一个all-in-one.yaml部署文件。 grafana-all-in-one.yaml:

apiVersion: extensions/v1beta1

kind: Deployment

metadata:

name: grafana-core

namespace: kube-system

labels:

app: grafana

component: core

spec:

replicas: 1

template:

metadata:

labels:

app: grafana

component: core

spec:

containers:

- image: grafana/grafana:4.2.0

name: grafana-core

imagePullPolicy: IfNotPresent

# env:

resources:

# keep request = limit to keep this container in guaranteed class

limits:

cpu: 100m

memory: 100Mi

requests:

cpu: 100m

memory: 100Mi

env:

# The following env variables set up basic auth twith the default admin user and admin password.

- name: GF_AUTH_BASIC_ENABLED

value: "true"

- name: GF_AUTH_ANONYMOUS_ENABLED

value: "false"

# - name: GF_AUTH_ANONYMOUS_ORG_ROLE

# value: Admin

# does not really work, because of template variables in exported dashboards:

# - name: GF_DASHBOARDS_JSON_ENABLED

# value: "true"

readinessProbe:

httpGet:

path: /login

port: 3000

# initialDelaySeconds: 30

# timeoutSeconds: 1

volumeMounts:

- name: grafana-persistent-storage

mountPath: /var

volumes:

- name: grafana-persistent-storage

emptyDir: {}

---

apiVersion: v1

kind: Service

metadata:

name: grafana

namespace: kube-system

labels:

app: grafana

component: core

spec:

type: NodePort

ports:

- port: 3000

selector:

app: grafana

component: core

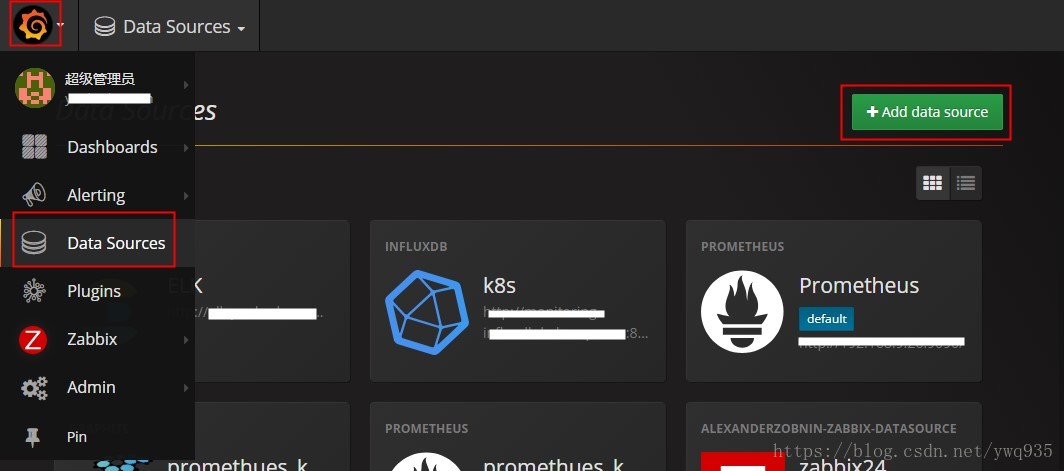

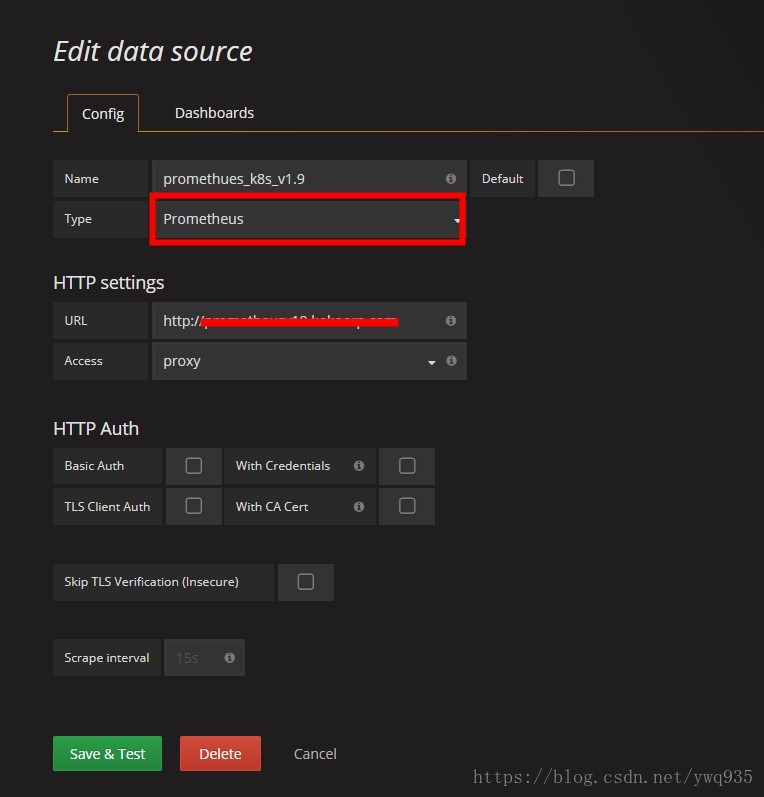

访问grafana,添加prometheus数据源:

默认管理账号密码为admin admin

选择资源类型,填入prometheus的服务地址及端口号,点击保存

选择资源类型,填入prometheus的服务地址及端口号,点击保存

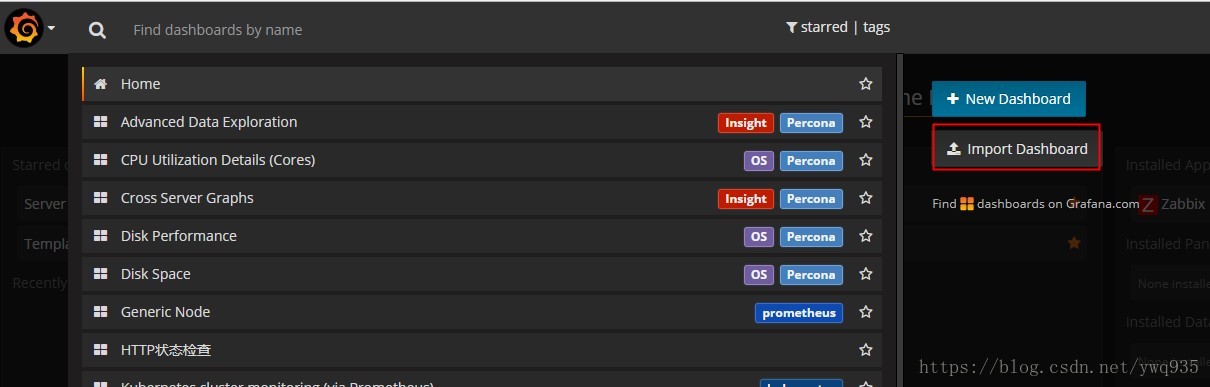

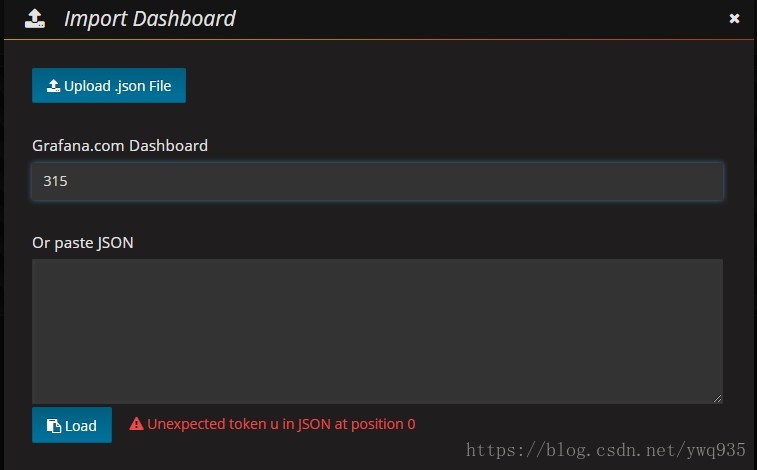

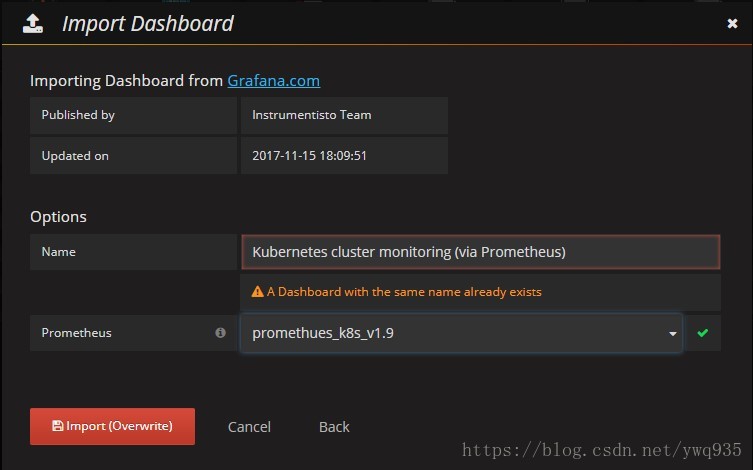

导入展示模板:

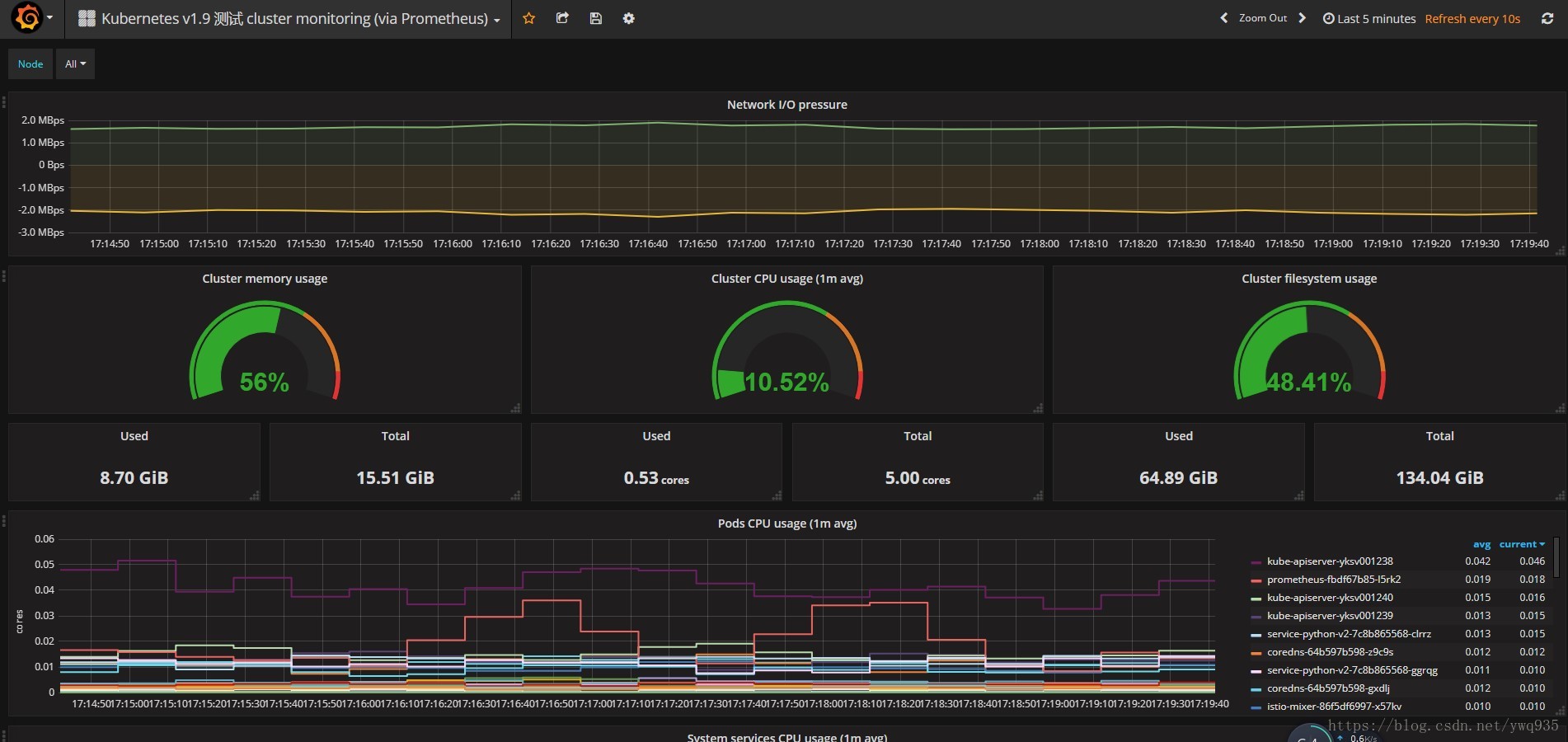

点击dashboard,点击import dashboard,在弹出框内填写数字315,会自动加载官方提供的315号模板,然后选择数据源为刚添加的数据源,模板就创建好了,非常easy。

基本部署到这里就结束了,下篇介绍一下prometheus的告警相关规则。

===========================================================================================

7.19更新:

最近发现,采用daemon-set方式部署的node-exporterc采集到的度量值不准确,最后发现需要将host的/proc和/sys目录挂载进node-exporter的容器内。 更新后的node-exporter.yaml文件:

apiVersion: extensions/v1beta1

kind: DaemonSet

metadata:

name: node-exporter

namespace: default

labels:

k8s-app: node-exporter

spec:

template:

metadata:

labels:

k8s-app: node-exporter

spec:

containers:

- image: prom/node-exporter

name: node-exporter

ports:

- containerPort: 9100

protocol: TCP

name: http

hostPort: 9101

volumeMounts:

- mountPath: /host/proc

name: proc

- mountPath: /host/sys

name: sys

args:

- --path.procfs=/host/proc

- --path.sysfs=/host/sys

- --collector.filesystem.ignored-mount-points

- "^/(dev|proc|sys|host|etc|rootfs|docker)($|/)"

volumes:

- hostPath:

path: /proc

name: proc

- hostPath:

path: /sys

name: sys

---

apiVersion: v1

kind: Service

metadata:

labels:

k8s-app: node-exporter

name: node-exporter

namespace: default

spec:

ports:

- name: http

port: 9100

nodePort: 31672

protocol: TCP

type````````````

NodePort selector: k8s-app: node-exporter

但是发现,部署完成之后,采集到的node指标依然不准确,非常奇怪,尝试脱离k8s使用docker方式直接部署,结果采集到的node数值就很准确了,有点不明白原因,后续继续排查一下。

docker运行命令:

docker run -d \ -p 9100:9100 \ --name node-exporter \ -v "/proc:/host/proc" \ -v "/sys:/host/sys" \ -v "/:/rootfs" \ --net="host" \ prom/node-exporter:v0.14.0 \ -collector.procfs /host/proc \ -collector.sysfs /host/sys \ -collector.filesystem.ignored-mount-points "^/(sys|proc|dev|host|etc)($|/)" ```

最后,记得修改configmap内的job相关targets配置。

为什么依附于k8s集群内采集的node指标就不准确,这个问题后续得好好研究,这次先到这里。